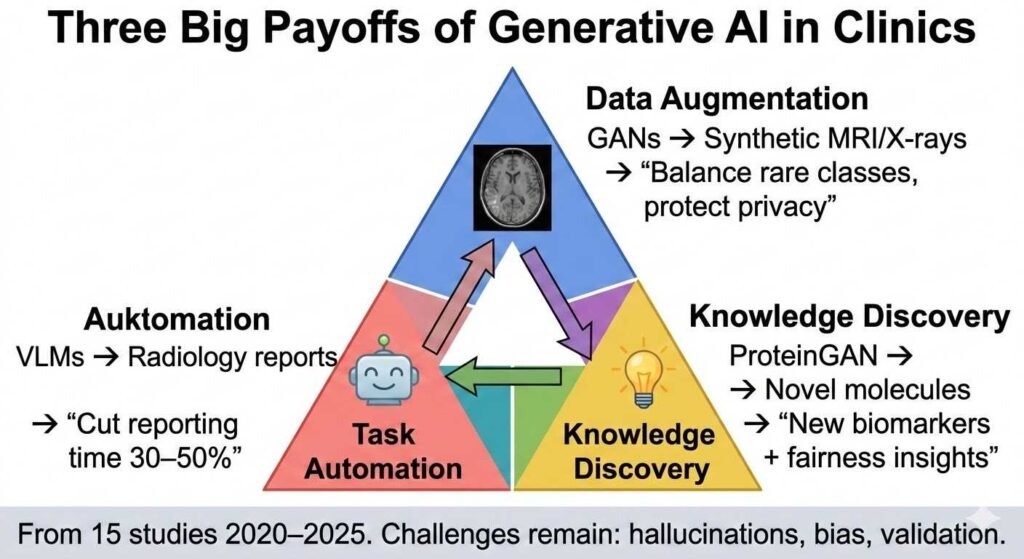

Three Big Payoffs from Generative AI in Clinics

Generative artificial intelligence (G‑AI), which includes generative adversarial networks (GANs), diffusion models, variational autoencoders (VAEs), and vision‑language models, has moved from proof‑of‑concept demonstrations to practical tools that augment radiology, dermatology, genetics, drug discovery, and electronic‑health‑record (EHR) analysis. A mini‑review published in Frontiers in Digital Health (November 2025) synthesizes 15 representative studies from 2020–2025 that together illustrate three dominant trends in G‑AI’s near‑term clinical value: privacy‑preserving data augmentation, automation of expert‑intensive tasks, and generation of new biomedical knowledge.

Image‑centric work still dominates, with GANs, diffusion models, and vision‑language models (VLMs) expanding limited datasets and accelerating diagnosis. Yet narrative (EHR) and molecular design domains are rapidly catching up. Despite demonstrated accuracy gains, recurring challenges persist: synthetic samples may overlook rare pathologies, large multimodal systems can hallucinate clinical facts, and demographic biases can be amplified. Robust validation, interpretability techniques, and governance frameworks therefore remain essential before G‑AI can be safely embedded in routine care.

Payoff #1: Privacy‑Preserving Data Augmentation

Healthcare has long grappled with data scarcity, class imbalance, and privacy restrictions. Curating large, balanced, publicly shareable clinical datasets is expensive, logistically complex, and ethically sensitive. G‑AI offers a remedy by synthesizing realistic yet privacy‑preserving data.

Medical imaging is the prime test‑bed. Early work by Han et al. introduced “pathology‑aware” GANs to augment computer‑aided‑diagnosis datasets and train novice radiologists. Aydin et al. re‑engineered StyleGANv2 to generate three‑dimensional Time‑of‑Flight MR angiography volumes, boosting multiclass artery segmentation without additional patient scans. Pawlicka et al. used GANs to synthesize colorectal polyps, alleviating class imbalance and improving endoscopic segmentation accuracy. Ultsch and Lötsch fine‑tuned a latent Stable Diffusion model for melanoma detection, suggesting that diffusion methods can rival GANs for dermoscopic realism.

Beyond pixels, G‑AI penetrates narrative and systemic domains. Alkhalaf et al. coupled a retrieval‑augmented Llama‑2 with zero‑shot prompting to summarize malnutrition risk from EHRs. Bordukova et al. exploited diffusion models to create digital‑twin patient trajectories, de‑risking costly clinical trials. Pinaya and colleagues generated synthetic chest X‑rays to lower the ethical burden of large‑scale training.

Quantified impact: Models trained with synthetic data often match or slightly exceed models trained on real data alone. Classifiers trained on GAN‑augmented datasets reach an AUROC of roughly 0.75, compared with 0.74 for real data alone; diffusion‑based augmentation improves F1 scores by balancing rare classes. This payoff is most immediate for rare diseases, under‑studied populations, and privacy‑sensitive settings.
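
As a rough illustration of how such comparisons are scored, the sketch below computes AUROC and F1 in plain Python. The labels, scores, threshold, and function names are made up for this example and are not taken from any of the cited studies.

```python
def auroc(labels, scores):
    """AUROC as the chance that a random positive case outscores a random negative one."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    # Count positive/negative pairs that are ranked correctly; ties count as half.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def f1_score(labels, preds):
    """F1 for the positive class: 2*TP / (2*TP + FP + FN)."""
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Hypothetical held-out labels and model scores (illustrative values only).
y_true = [0, 0, 1, 1]
y_score = [0.1, 0.4, 0.35, 0.8]
y_pred = [1 if s >= 0.5 else 0 for s in y_score]  # assumed 0.5 decision threshold

print(f"AUROC = {auroc(y_true, y_score):.2f}")  # 3 of 4 positive/negative pairs ranked correctly
print(f"F1    = {f1_score(y_true, y_pred):.2f}")
```

In a real comparison, the same functions would be run twice on one held-out set of real cases: once for a model trained on real data only and once for a model trained on real plus synthetic data. Scoring both on real cases keeps the comparison meaningful.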

Payoff #2: Automation of Expert‑Intensive Tasks

G‑AI is automating repetitive, expert‑heavy tasks that bottleneck clinical workflows.

Radiology reporting stands out. Phipps et al. explored VLMs that translate chest X‑ray features into free‑text reports, potentially reducing radiologist workload during high‑volume shifts. Their evaluation framework revealed efficiency gains but also hallucination risks—a reminder that factual grounding is critical. Huang et al. corroborated this in emergency workflows, showing both promise and evaluation challenges for text‑generating models.

Surgical and procedural support follows. Conditional GANs such as TP‑GAN automate prostate brachytherapy planning, cutting planning time and variability while matching dosimetric quality. Generative models are also applied to surgical video, where they produce annotations, training material, and quality metrics.

Nursing and documentation benefit too. In simulations, voice‑to‑text, automated charting, and note summarization save nurses an estimated 95–134 hours per year; retrieval‑augmented LLMs draft patient portal replies, reducing cognitive load.

Key limitation: Synthetic or generated outputs often miss rare pathologies or encode bias, requiring human oversight.

Payoff #3: Generation of New Biomedical Knowledge

G‑AI is not just copying—it is discovering.

Molecular design accelerates drug discovery. Zeng et al. used ProteinGAN and hierarchical models to design novel proteins and small molecules. Khosravi et al. generated race‑aware radiographs to audit bias, surfacing fairness insights.

Hypothesis generation is emerging as well. Generative models can surface novel biomarkers, inequities, or molecular scaffolds that humans might overlook.

Early evidence: ProteinGAN candidates have advanced to trials; bias audits reveal demographic skews in pelvic imaging.

Challenges and the Path Forward

- Rare pathology gaps: Synthetic data can miss rare or subtle variants.

- Hallucinations: VLMs can invent clinical facts.

- Bias amplification: Skews in the training data propagate into generated outputs.

- Evaluation gaps: Similarity scores such as FID (for images) and BLEU (for text) do not guarantee clinical utility.
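
To make the last point concrete, the toy example below computes clipped n-gram precision, the overlap statistic at the core of BLEU. It is a simplified sketch rather than full BLEU (it omits the brevity penalty and the geometric mean over n-gram orders), and the two report sentences are invented for illustration.

```python
from collections import Counter


def ngrams(tokens, n):
    """All contiguous n-grams in a token list."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_precision(candidate, reference, n):
    """Share of candidate n-grams that also occur in the reference (counts clipped)."""
    cand = Counter(ngrams(candidate, n))
    ref = Counter(ngrams(reference, n))
    total = sum(cand.values())
    if total == 0:
        return 0.0
    matched = sum(min(count, ref[gram]) for gram, count in cand.items())
    return matched / total


# Invented example: the generated report drops the word "no".
reference = "no evidence of pneumothorax or pleural effusion".split()
candidate = "evidence of pneumothorax or pleural effusion".split()

for n in (1, 2):
    print(f"{n}-gram precision = {clipped_precision(candidate, reference, n):.2f}")
```

Every unigram and bigram in the candidate also appears in the reference, so both precisions come out at 1.00 even though dropping "no" reverses the clinical finding. This is why overlap scores need to be paired with clinically grounded checks.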

Safeguards: interpretability techniques (such as StylEx), bias audits, external validation, and transparent provenance.
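
One simple form a bias audit can take is a per-subgroup comparison of sensitivity. The sketch below assumes each record carries a demographic group label, a ground-truth label, and a model prediction; the record layout, group names, and values are hypothetical.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return {group: true-positive rate} from (group, label, prediction) tuples."""
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, label, pred in records:
        if label == 1:
            positives[group] += 1
            if pred == 1:
                true_pos[group] += 1
    # Groups with no positive cases are left out: sensitivity is undefined for them.
    return {group: true_pos[group] / n for group, n in positives.items()}


# Hypothetical audit records: (group, true label, predicted label).
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 0, 0),
]

rates = sensitivity_by_group(records)
gap = max(rates.values()) - min(rates.values())
for group, rate in sorted(rates.items()):
    print(f"group {group}: sensitivity = {rate:.2f}")
print(f"largest gap between groups = {gap:.2f}")
```

A large gap would flag the model for closer review. What counts as an acceptable gap is a clinical and governance decision, not something the code can settle.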

Future trends: text‑to‑3D generation for surgical planning, and integration into education and management.