Generative AI Is Already in Real Clinical Workflows

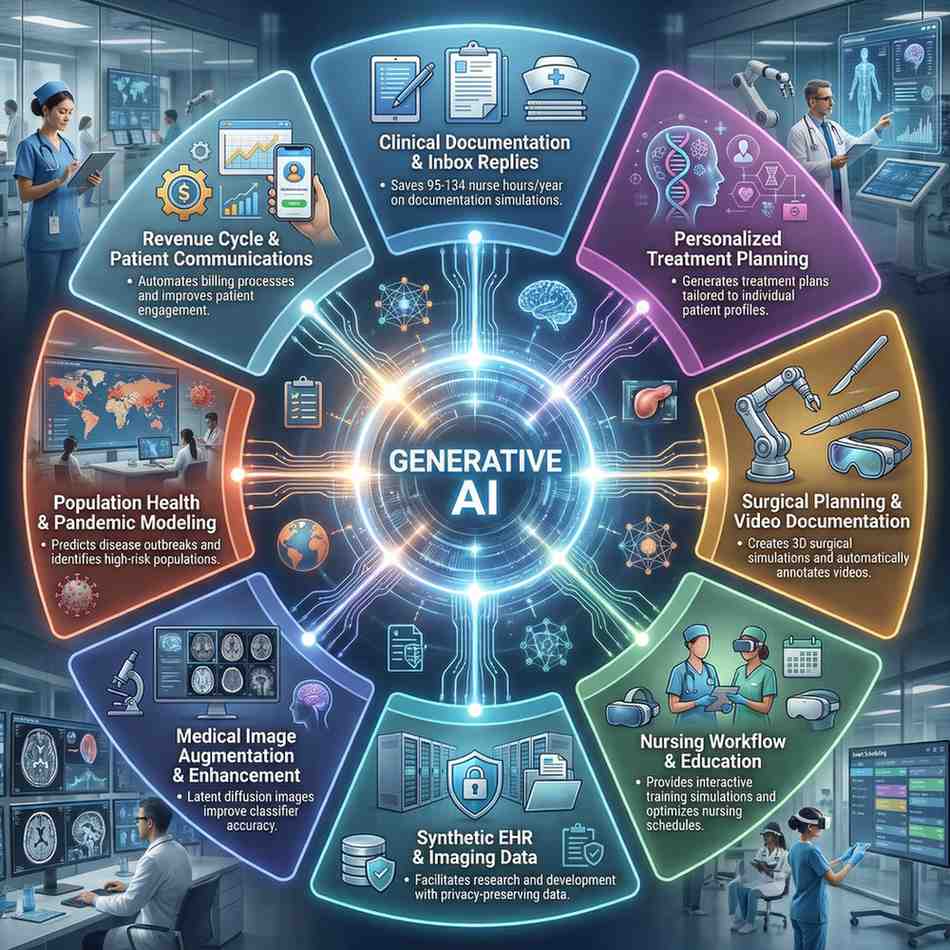

Generative AI is often discussed as a future technology, but in healthcare it has already crossed an important line: it is being deployed inside real clinical and operational workflows today. A 2025 review in the Journal of Medical Systems catalogues generative AI applications across clinical care, nursing, surgery, medical imaging, synthetic data, and population health. These are not just proofs of concept; they are early examples of how generative models can augment clinicians, reduce administrative burden, and open up new forms of data‑driven care.

Generative AI (GenAI) refers to models that create new content (text, images, code, or structured data) based on patterns learned from large datasets. The family includes large language models (LLMs), generative adversarial networks (GANs), variational autoencoders (VAEs), and related architectures. In healthcare, those generative capabilities translate into augmented documentation, synthetic clinical datasets, image synthesis and enhancement, personalized treatment suggestions, and scenario modeling for population health and pandemics.

Where Generative AI Is Already Being Used Clinically

The 2025 NIH‑indexed review organizes GenAI’s clinical roles into several key domains.

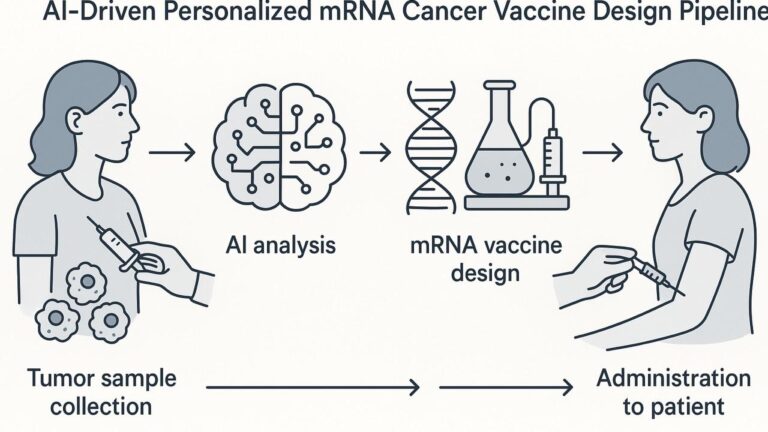

1. Tailored Treatment Plans and Precision Medicine

Generative models are being used to support personalized care by:

- Analyzing large health datasets (EHRs, imaging, omics) to identify patterns relevant for individualized treatment planning.

- Using GANs to simulate virtual patient populations so treatment effects can be explored across demographic and genetic subgroups, particularly when real data are sparse.

- Designing molecules tailored to specific biological pathways with models such as GENTRL, which was reported to produce novel kinase‑inhibitor candidates in a matter of weeks rather than the years typical of early‑stage drug discovery.

In oncology and radiotherapy planning, conditional GAN frameworks like TP‑GAN (treatment planning GAN) have been used to automate prostate brachytherapy plans, reducing planning time and variability across planners while maintaining or improving dosimetric quality.

Generative AI is also supporting pharmacogenomics by analyzing genotype–phenotype relationships and predicting likely individual responses to medications, which can guide dose and drug selection.

2. Surgical Care and Intra‑Operative Support

In surgery, generative and related AI models are being integrated from pre‑op planning through post‑op documentation.

- Pre‑operatively, GenAI can condense large volumes of patient data and literature into concise briefs, helping surgeons synthesize imaging, history, labs, and guidelines more efficiently.

- Intra‑operatively, early systems use AI to analyze surgical video streams and generate real‑time annotations or feedback, with the goal of supporting training and quality metrics.

- Post‑operatively, companies like Johnson & Johnson’s MedTech unit, in collaboration with Nvidia, are using AI to automate parts of surgical video analysis and documentation, reducing manual dictation burdens.

Robotic platforms such as the da Vinci system and research systems like STAR (Smart Tissue Autonomous Robot) illustrate how AI‑driven control and planning can enhance precision in minimally invasive procedures. These systems still operate under human supervision, and fully autonomous surgery remains a research goal rather than clinical practice.

3. Reducing Provider Burnout Through Documentation and Inbox Support

Burnout has become a crisis for physicians and nurses, driven in part by documentation and inbox overload. Generative AI is being used to address this in several ways:

- Drafting patient‑message replies: Studies show that GPT‑4 can generate high‑quality, empathetic draft responses to patient portal messages; in at least one trial, clinicians who used such drafts reported lower mental task load and less work exhaustion.

- EHR documentation support: In simulation studies, voice‑to‑text, automated charting, and note summarization cut nurses' documentation time by 21–30%, which corresponds to 95–134 hours saved per nurse per year.

- Reducing EHR friction: Because dissatisfaction with EHRs is strongly associated with burnout, GenAI‑based summarization and data‑surfacing can help clinicians spend more time interacting with patients instead of screens.

These tools are still being refined and monitored for safety, but the early evidence suggests real potential to reduce cognitive load and free up time for direct care.
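As a rough consistency check on the nursing documentation figures above, the percentage range and the hour range can be compared to see what annual documentation baseline they imply. The sketch below assumes the lower and upper ends of the two ranges pair up (21% with 95 hours, 30% with 134 hours); that pairing is an assumption made here, not something the review states.

```python
def implied_baseline_hours(hours_saved: float, fraction_saved: float) -> float:
    """Annual documentation hours implied by a reported saving."""
    if not 0 < fraction_saved <= 1:
        raise ValueError("fraction_saved must be in (0, 1]")
    return hours_saved / fraction_saved


# Assumed pairing of the reported ranges: (hours saved, fraction of time saved).
low = implied_baseline_hours(95, 0.21)
high = implied_baseline_hours(134, 0.30)

print(f"{low:.0f} {high:.0f}")  # prints "452 447"
```

Both ends point to roughly 450 documentation hours per nurse per year, so the two reported ranges are at least consistent with each other.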

4. Nursing Workflows and Education

The review also highlights nursing as a major application area.

- AI‑enhanced simulations create realistic, dynamic training cases for nursing students, allowing them to practice complex scenarios without exposing patients to risk.

- Clinical tools can help forecast falls, automate documentation, and streamline admissions, transfers, and discharge processes—saving an estimated 32–40 hours per nurse annually in administrative workflows.

- Systems like the A+ Nurse digital assistant in Taiwan combine generative models with workflow tools to automate routine tasks, improve team communication, and bring current evidence directly into nursing care.

GenAI chatbots can also offer social support and coaching to patients when human resources are constrained, especially in mental health and chronic disease contexts.

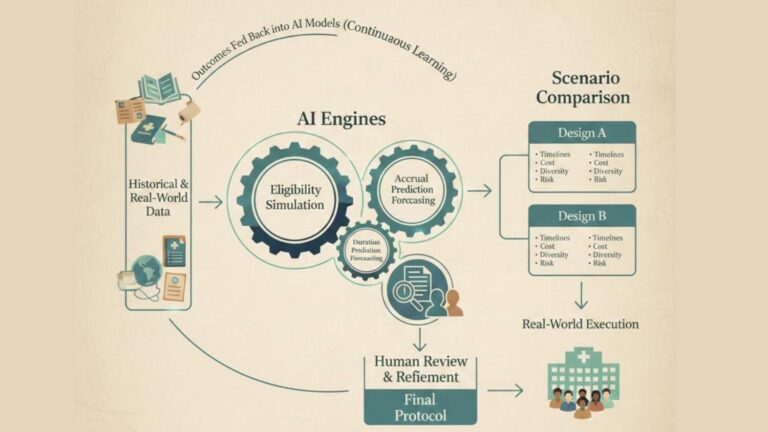

Synthetic Data: Training AI Without Touching Real Patients

A major bottleneck in healthcare AI has always been data access: clinical data are sensitive and often fragmented. Generative models, especially GANs and VAEs, are now being used to create synthetic clinical data that approximate real distributions without exposing individual records.

Key examples include:

- Synthetic EHRs and claims data: Architectures like CorGAN use convolutional GANs and autoencoders to model correlations between adjacent medical features and generate realistic synthetic tabular health records.

- Augmenting scarce datasets: Adding synthetic chest radiographs generated by latent diffusion models to real training data has improved classification performance on certain tasks.

- Improved prediction tasks: Synthetic data created with Gaussian copulas, conditional GANs, VAEs, and Copula‑GAN have been shown to enhance non‑invasive diabetes prediction models when combined with real data.

- Task‑specific text data: Carefully prompted LLMs like ChatGPT have been used to generate synthetic biomedical text that improves performance on named entity recognition and relation extraction tasks when combined with real corpora.

Startups such as MDClone already offer synthetic data platforms that enable researchers to explore health-system data while staying within privacy regulations like HIPAA and GDPR.
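To make the Gaussian copula idea mentioned above more concrete, here is a minimal two‑column sketch in plain Python. It is not the method used in the cited diabetes studies or in any particular product; the toy columns (`ages`, `systolic`) are made‑up data, and all function names are hypothetical. The approach is: convert each column to normal scores by rank, estimate the correlation of those scores, sample correlated normals, and map them back through each column's empirical quantiles.

```python
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()


def pearson(xs, ys):
    """Plain Pearson correlation of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def normal_scores(values):
    """Replace each value by the standard-normal quantile of its rank."""
    n = len(values)
    order = sorted(range(n), key=lambda i: values[i])
    scores = [0.0] * n
    for rank, i in enumerate(order, start=1):
        scores[i] = STD_NORMAL.inv_cdf(rank / (n + 1))
    return scores


def empirical_quantile(sorted_values, u):
    """Value at quantile u of an already-sorted sample."""
    idx = min(int(u * len(sorted_values)), len(sorted_values) - 1)
    return sorted_values[idx]


def gaussian_copula_sample(xs, ys, n_samples, seed=0):
    """Draw synthetic (x, y) pairs that keep the marginals and rank dependence."""
    rng = random.Random(seed)
    rho = pearson(normal_scores(xs), normal_scores(ys))
    resid = max(0.0, 1.0 - rho * rho) ** 0.5
    sorted_x, sorted_y = sorted(xs), sorted(ys)
    pairs = []
    for _ in range(n_samples):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rho * z1 + resid * rng.gauss(0.0, 1.0)  # correlated with z1
        pairs.append((empirical_quantile(sorted_x, STD_NORMAL.cdf(z1)),
                      empirical_quantile(sorted_y, STD_NORMAL.cdf(z2))))
    return pairs


# Made-up toy data standing in for two real clinical columns.
toy_rng = random.Random(42)
ages = [toy_rng.uniform(30, 80) for _ in range(200)]
systolic = [100 + 0.6 * a + toy_rng.gauss(0, 8) for a in ages]

synthetic = gaussian_copula_sample(ages, systolic, n_samples=500, seed=1)
syn_ages, syn_systolic = zip(*synthetic)
print(round(pearson(ages, systolic), 2), round(pearson(syn_ages, syn_systolic), 2))
```

The two printed correlations should be close, which is the point of the copula: the dependence structure is carried over while rows are newly sampled. Note that this simple version reuses observed values within each column, so it is an illustration of the mechanics rather than a privacy guarantee.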

Generative AI in Medical Image Analysis

Generative models are proving particularly useful in medical imaging, both for training and for clinical tasks.

- Image synthesis and augmentation: GANs can generate realistic MRI, CT, and retinal images, enriching datasets for training diagnostic models—especially in domains where rare conditions or subtle findings are under‑represented.

- Enhancement and reconstruction: GANs and related models can enhance low‑dose CT images, improving quality while enabling lower radiation exposure, and reconstruct undersampled MRIs to reduce scan time.

- Anomaly detection and segmentation: Architectures like AnoGAN and GAN‑based segmentation networks have achieved high Dice scores (for example, ~0.89 on brain MRI), outperforming some traditional approaches and aiding early detection tasks.

These capabilities are beginning to appear in clinical workflows as adjunct tools for radiologists, not replacements, offering better training data, improved image quality, and automated pre‑reads.
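For readers unfamiliar with the Dice score cited for segmentation above, it measures the overlap between a predicted mask and a reference mask as twice the shared area divided by the sum of both areas, so 1.0 is perfect agreement and 0.0 is none. The short sketch below computes it for two tiny made‑up binary masks; the function name and the handling of two empty masks are choices made for this example.

```python
from itertools import chain


def dice_score(pred_mask, true_mask):
    """Dice = 2 * |A and B| / (|A| + |B|) for two binary masks (lists of rows)."""
    pred = list(chain.from_iterable(pred_mask))
    true = list(chain.from_iterable(true_mask))
    if len(pred) != len(true):
        raise ValueError("masks must have the same number of pixels")
    overlap = sum(1 for p, t in zip(pred, true) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in true if t)
    if total == 0:
        return 1.0  # convention chosen here: two empty masks agree perfectly
    return 2 * overlap / total


# Made-up 2x3 masks: 1 marks a pixel labelled as lesion, 0 as background.
predicted = [[0, 1, 1],
             [0, 1, 0]]
reference = [[0, 1, 0],
             [0, 1, 1]]

print(f"{dice_score(predicted, reference):.3f}")  # prints "0.667"
```

Here each mask marks three pixels and two of them coincide, giving 4/6. A reported score near 0.89 therefore means the predicted and reference regions overlap almost entirely.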

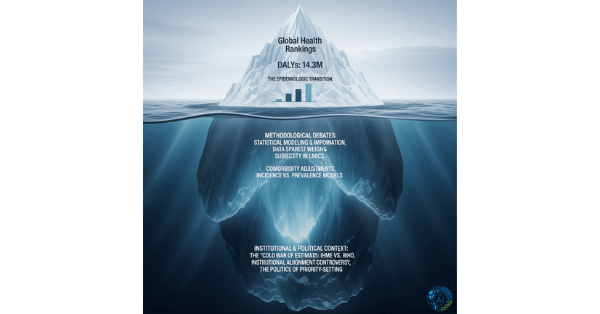

Supporting Population Health and Pandemic Preparedness

Looking beyond individual patients, generative and predictive AI tools are starting to shape population health and outbreak preparedness.

- Deep learning models interpret biological and EHR data to predict disease risk across populations, enabling more targeted preventive interventions.

- Integrating individual health data with “socio‑markers” (quantified social determinants) improves risk stratification and surveillance, especially when combined with mobile and IoT data streams.

- During COVID‑19, AI supported forecasting, outbreak detection, vulnerability indices (such as the UK’s QCOVID3 and Australia’s CPVI), and policy planning; similar architectures can be adapted for future pandemics.

GenAI’s ability to synthesize reports, scenario narratives, and communication materials also helps public health teams communicate complex risk information more efficiently.

Non-Clinical Uses: Education, Revenue Cycle, and Marketing

The same generative capabilities are affecting the “business” and educational sides of healthcare.

- Medical education: GANs and VAEs generate synthetic images and cases for training; LLMs help with literature review, question generation, and personalized learning resources.

- Revenue cycle management (RCM): Generative models support automated coding, denial prediction, and patient‑friendly communications, with one Deloitte‑linked study estimating potential time savings of 41–50% across RCM stages.

- Healthcare marketing and PR: GenAI systems craft tailored content, automate first‑line customer interactions, and analyze which messages and formats resonate most with different patient segments.

These non‑clinical applications may not appear at the bedside, but they influence sustainability, access, and patient engagement across the system.

Challenges and Guardrails

Despite the momentum, the 2025 review stresses several challenges:

- Data quality and bias: Generative models can amplify biases in training data, especially if minority groups are under‑represented.

- Hallucinations and factuality: LLMs can produce plausible‑sounding but incorrect content, which is dangerous in clinical contexts.

- Privacy and re‑identification risk: Synthetic data must be carefully evaluated to ensure they do not leak identifiable information.

- Regulation and validation: Most GenAI applications still lack large‑scale prospective trials linking use to patient outcomes.

- Workforce readiness: Effective use requires training clinicians in prompt design, critical AI appraisal, and governance.
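One simple way to start probing the re‑identification risk listed above is a distance‑to‑closest‑record check: for every synthetic row, find the nearest real row and flag synthetic rows that sit suspiciously close. The sketch below uses made‑up two‑feature records and a hypothetical threshold; it is a first‑pass screen under those assumptions, not a formal privacy evaluation, and in practice features would need to be scaled before distances are compared.

```python
import math


def closest_record_distances(synthetic, real):
    """For each synthetic row, Euclidean distance to its nearest real row."""
    return [min(math.dist(s, r) for r in real) for s in synthetic]


def flag_possible_leaks(synthetic, real, threshold):
    """Indices of synthetic rows within `threshold` of some real row."""
    distances = closest_record_distances(synthetic, real)
    return [i for i, d in enumerate(distances) if d <= threshold]


# Made-up records: (age, systolic blood pressure).
real_rows = [(54.0, 132.0), (61.0, 140.0), (47.0, 118.0)]
synthetic_rows = [(54.0, 132.0), (58.0, 136.5), (70.0, 150.0)]

# The threshold of 1.0 is an arbitrary illustrative value.
print(flag_possible_leaks(synthetic_rows, real_rows, threshold=1.0))  # prints "[0]"
```

The first synthetic row is an exact copy of a real record and is flagged. Passing such a check does not prove a dataset is safe, which is why the review calls for careful, dedicated evaluation.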

Guidelines from bodies such as the FUTURE‑AI consortium emphasize transparency, robustness, fairness, and human oversight as essential preconditions for safe deployment.